Generative AI 101 Guide

The most important advantage humans have over other species is our ability to adapt to any situation. Another thing we are good at is our ability to analyze, reason and solve problems. Even thousands of years ago, we were dreaming of mechanisms that could be considered the predecessors of artificial intelligence, mechanisms that could perform tasks beyond the natural limits of human power. In Greek mythology, Talos was a giant bronze statue tasked with protecting the island of Crete and was described as a thinking mechanism that could move automatically.

With the launch of OpenAI's Generative AI product ChatGPT at the end of November 2022, we are experiencing an AI revolution in every field. Never before in history has AI been so accessible to the general public. Artificial intelligence, which for a long time meant something only to sci-fi fans and scientists, is now a big part of our daily lives, especially in business. For example, Generative AI can create a presentation on consumer behavior for us from start to finish, right before a brainstorming meeting for the social media campaign of our new product.

Analytical AI, or traditional artificial intelligence, has been quietly making its way into our lives for a long time. The next video TikTok shows us is chosen by artificial intelligence. Machines can now easily tell from a visitor's behavior on a site whether that visitor is a real user or a bot. It is almost impossible to analyze large data sets without the support of artificial intelligence.

However, until recently, we didn't think that AI could compete with humans in any way in areas that require creativity. We were using AI more as cognitive labor for analysis and big data-driven work. The introduction of Generative AI has fundamentally changed our perspective on both AI itself and the potential we can achieve with AI.

The era of generative AI is just beginning and it will take time for the benefits of this new technology to be fully realized. Competitive advantage is predicted to shift to organizations that are the first to integrate generative AI into their innovation, company growth and workflow processes. A McKinsey study conducted in the US in mid-April 2023 shows that generative AI is on the board agenda of 28% of the organizations to which respondents belong. One-third of organizations are using generative AI in at least one business function.

What is Generative AI?

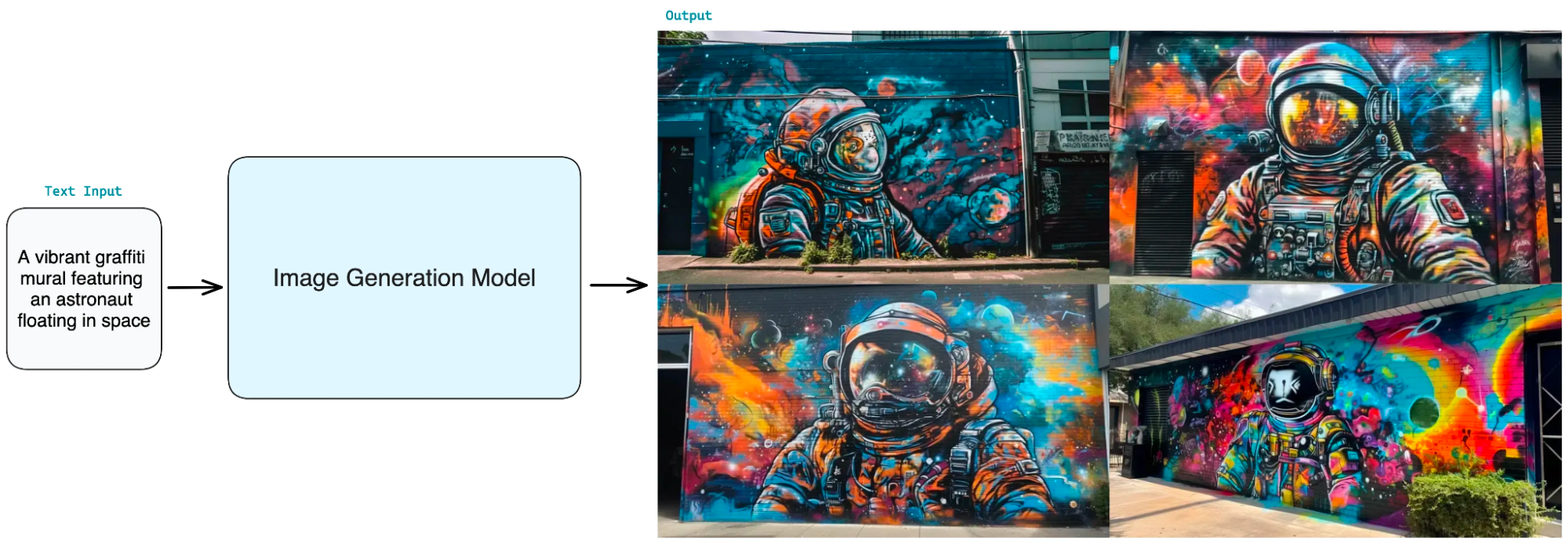

Generative AI, or Generative Artificial Intelligence, can be defined as a subset of artificial intelligence that can create artifacts that mimic various aspects of human creativity and intelligence. Generative AI refers to AI techniques and models that can fulfill the function of generating original content such as product design, image generation, copywriting, audio generation, code generation, etc.

Generative AI starts with a prompt, which can take the form of a text, image, video, design, musical score or any other input that the AI system can process. Various AI algorithms then create new and original content in response to this prompt.

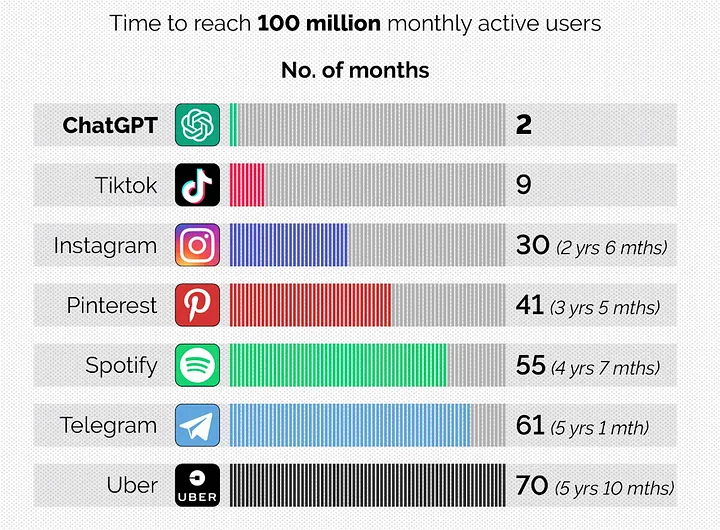

OpenAI's launch of ChatGPT, the most ubiquitous generative AI application, or interface, of our time, is a major technological development that some consider a watershed moment. ChatGPT reached 100 million active users in just 2 months, a growth rate never seen before on any online platform, and it has revolutionized the market for AI applications. This revolution has touched and influenced all sectors from healthcare to entertainment in one way or another. The potential of Generative AI technologies is too big for any business to ignore.

What Does Generative AI Do?

The greatest power of generative AI is its ability to generate new ideas, designs and solutions to problems that humans have never thought of before. This ability can support creative work in areas such as art, design, written content production and engineering.

Generative AI can also significantly increase efficiency and productivity in many industries. Repetitive and time-consuming tasks can be automated with Generative AI, freeing up time and resources for more important tasks. For example, instead of manually creating unique text for each product, an e-commerce company can have generative AI generate product descriptions based on the products' features. This way, marketing teams only have to check product descriptions and can spend the rest of their time on more creative and strategic tasks.
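The product description example above can be sketched as a small prompt builder. This is only an illustration under stated assumptions: the product fields, the wording of the prompt and the function name `build_description_prompt` are hypothetical, and the step that sends the prompt to an actual generative model is left out on purpose.

```python
def build_description_prompt(product: dict) -> str:
    """Turn structured product features into a text prompt for a generative model."""
    features = "\n".join(
        f"- {name}: {value}" for name, value in product["features"].items()
    )
    return (
        f"Write a short, friendly product description for '{product['name']}'.\n"
        f"Use only the following features:\n{features}\n"
        "Keep it under 60 words."
    )


# Hypothetical catalog entry; the field names are assumptions for this sketch.
product = {
    "name": "Trail Runner 2",
    "features": {"weight": "240 g", "sole": "recycled rubber", "color": "forest green"},
}
prompt = build_description_prompt(product)
print(prompt)
```

Running the same function over every item in a catalog would give one prompt per product, and the marketing team would then review the generated descriptions rather than write each one from scratch.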

At the same time, it may be possible to make better business decisions with AI support. For example, businesses can use generative AI to uncover insights in their data that will guide their decision-making on marketing strategies or on developing a new product.

Generative AI can produce personalized content by understanding individual preferences. For example, in the fashion industry, generative AI can be used in personalized clothing design processes. Generative AI can increase customer satisfaction and loyalty by producing personalized solutions in this way.

Generative AI can also be a source of inspiration in creative workflows and speed up creative processes. In areas such as design, generative AI tools can help creative professionals work more efficiently.

Generative AI Applications

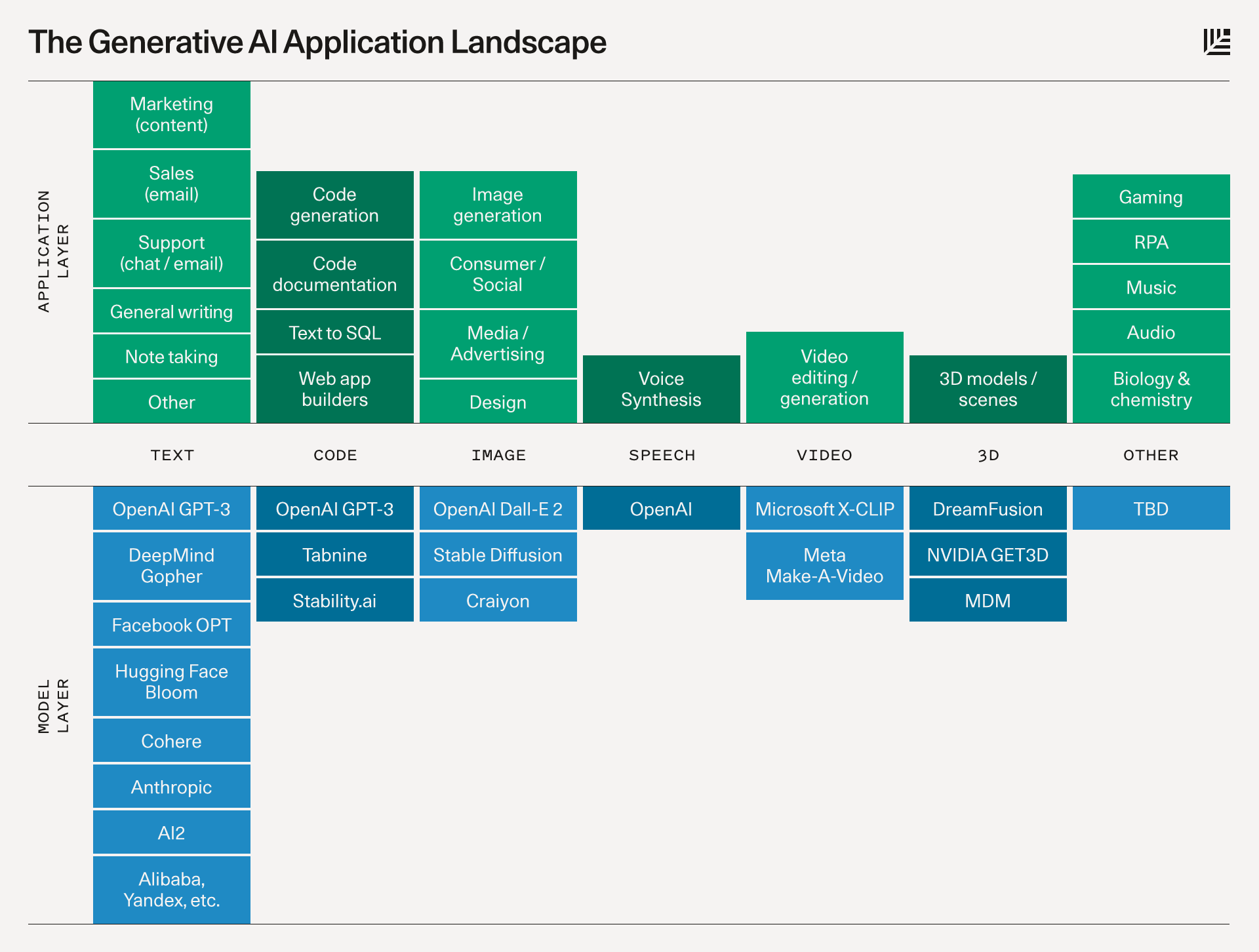

At this point, it is worth mentioning the difference between Generative AI models and Generative AI interfaces. Generative AI, as you know, refers to AI models that can generate content in text, images, audio and other formats. For example, models like GPT-4 can generate text, while DALL-E can generate images. Generative AI interfaces and applications are tools that are built on models like GPT-4 and make these models accessible in a more user-friendly way.

This way, any user can use powerful AI models without having to deal with technical details. For example, ChatGPT is actually a generative AI application that uses the GPT-3.5 and GPT-4 models.

Some generative AI models can be used directly through an interface of their own. For this reason, the terms model and interface are sometimes used synonymously. Some applications rely on open source models in the background, and many models are made accessible to developers through APIs.
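The split between a model and the application built on top of it can be sketched in a few lines. Everything here is hypothetical and only meant to show the layering: `TextModel`, `EchoModel` and `ChatApp` are names invented for this sketch, and `EchoModel` is a stand-in that does not generate anything new.

```python
from typing import Protocol


class TextModel(Protocol):
    """Model layer: anything that maps a prompt to generated text."""

    def generate(self, prompt: str) -> str: ...


class EchoModel:
    """Stand-in model for this sketch; a real model would generate new text."""

    def generate(self, prompt: str) -> str:
        return f"[model output for: {prompt.splitlines()[-1]}]"


class ChatApp:
    """Application layer: keeps the conversation and hides prompt formatting."""

    def __init__(self, model: TextModel) -> None:
        self.model = model
        self.history: list[str] = []

    def ask(self, message: str) -> str:
        # The user only types a message; the app builds the full prompt.
        self.history.append(f"User: {message}")
        reply = self.model.generate("\n".join(self.history))
        self.history.append(f"Assistant: {reply}")
        return reply


app = ChatApp(EchoModel())
print(app.ask("Summarize our return policy."))
```

Because `ChatApp` depends only on the `generate` method, the same interface could sit on top of a different model without any change for the end user.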

Especially with the proliferation of low-code and no-code applications, it is now possible for businesses to create artificial intelligence applications that solve the problems they face in their business processes. Simply put, companies that use applications such as Discord or Slack for internal communication can design bots that facilitate their workflows without much effort. Or you can create your own AI content application by training open source models on your own company policies. You can design personalized assistants for your customers that go one step beyond a chatbot. Using only apps like Google Sheets as an interface, you can automate a task that you used to spend hours on. The first step is simply understanding the potential of AI and imagining an application that you can create using AI models.

We can summarize some of the AI interfaces we hear a lot about, which are products in their own right, as follows. Compared to the power of what can be done with generative AI models, these applications are only a small part of the ecosystem. We plan to discuss these applications in more detail in separate articles.

- Content production: GPT, Jasper, AI-Writer and Lex.

- Image production: DALL-E 2, Midjourney and Stable Diffusion.

- Music production: Amper, Dadabots and MuseNet.

- Code generation: CodeStarter, Codex, GitHub Copilot and Tabnine.

- Audio production: Descript, Listnr and Podcast.ai.

- AI chip design: Synopsys, Cadence, Google and Nvidia.

Here we can see how the application and model layers interact with each other in generative AI

How Does Generative AI Work?

Unlike traditional AI systems, generative AI uses advanced deep learning models, including large language models (LLMs), to learn patterns and structures from its training data. The trained model then generates new content using the learned patterns and structures.

For example, a generative AI system may be presented with a large number of images of human faces. After discovering patterns in all these images, such as facial features, outlines, skin color, etc., the generative AI can create new faces that do not belong to any human in the world.
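The same "learn patterns, then generate something new" loop can be shown at toy scale. The sketch below is not deep learning and is far simpler than any real generative model: it only counts which character tends to follow which in a handful of made-up training words, then samples new words from those counts. The function names and the training words are assumptions chosen for illustration.

```python
import random
from collections import defaultdict


def train_bigram_model(words: list[str]) -> dict[str, list[str]]:
    """Record which character follows which ('^' marks start, '$' marks end)."""
    followers: dict[str, list[str]] = defaultdict(list)
    for word in words:
        chars = ["^", *word, "$"]
        for current, nxt in zip(chars, chars[1:]):
            followers[current].append(nxt)
    return followers


def generate_word(
    followers: dict[str, list[str]], rng: random.Random, max_len: int = 12
) -> str:
    """Sample a new word one character at a time from the learned followers."""
    out, current = [], "^"
    while len(out) < max_len:
        current = rng.choice(followers[current])
        if current == "$":
            break
        out.append(current)
    return "".join(out)


training_words = ["maria", "marco", "mario", "carla", "carlo", "clara"]
model = train_bigram_model(training_words)
rng = random.Random(0)
print([generate_word(model, rng) for _ in range(5)])
```

Some of the sampled words will not appear in the training list at all, yet they follow its letter patterns, which is the same idea as generating a face that belongs to no real person.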

Levi's used models created entirely by AI for product images on its e-commerce site.

Early versions of generative AI required submitting data through an API or some other complex process. Developers had to know these specialized tools and write intermediate applications in languages like Python. Today, AI pioneers are building better user experiences that allow us to describe a request in plain language alone. After Generative AI's initial response, we can customize the output by giving feedback on the style, tone and other elements of the generated content.

The first stage of developing a generative AI starts with collecting and processing a large, diverse data set of the type of data you want to create. For example, if you dream of building a Generative AI product for textual content creation, you need to compile a dataset of various texts. The next step is to decide which generative AI model to use.
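As a rough idea of what "collecting and processing" can mean for a text dataset, here is a minimal cleaning sketch. The specific rules (collapse whitespace, drop texts shorter than three words, drop duplicates while ignoring case) are assumptions made for this example; real pipelines are typically far more involved.

```python
import re


def clean_corpus(raw_texts: list[str], min_words: int = 3) -> list[str]:
    """Normalize whitespace, drop very short texts, remove duplicates (ignoring case)."""
    seen: set[str] = set()
    cleaned: list[str] = []
    for text in raw_texts:
        normalized = re.sub(r"\s+", " ", text).strip()
        key = normalized.lower()
        if len(normalized.split()) < min_words or key in seen:
            continue
        seen.add(key)
        cleaned.append(normalized)
    return cleaned


# Hypothetical raw customer reviews.
raw = [
    "  Great  running shoes! ",
    "great running shoes!",
    "ok",
    "Fast delivery,\nwould buy again.",
]
print(clean_corpus(raw))
```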

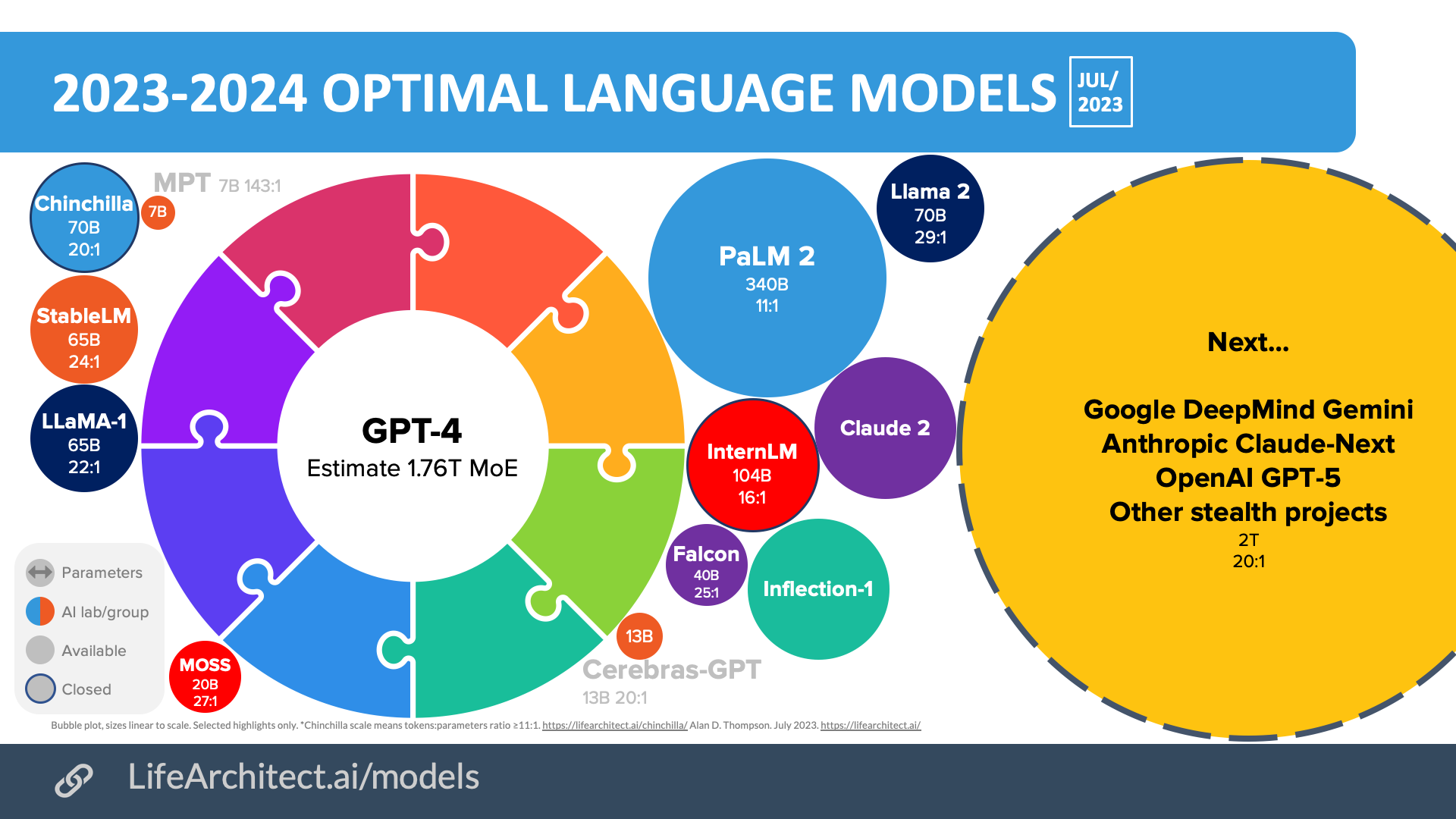

Generative AI Models

In the field of Generative AI, both open-source communities and big companies have been investing heavily in recent years. All these advances are revolutionizing areas such as natural language processing. At the same time, growing datasets and advances in computing hardware are enabling larger and more sophisticated models to emerge.

Language model ecosystem as of July

Let's take a closer look at a few of the most popular generative AI models, with a focus on the technical aspects. Let's dream about the products that can be created with generative AI.

1. Transformer-Based Models

Transformer-based models are one of the key drivers of the rapid development of artificial intelligence in recent years. Transformer-based models, which first appeared in a paper published by Google in 2017, can be defined as powerful neural networks that learn context, and therefore meaning, by tracking the relationships between elements of sequential data such as the words in a sentence. Transformer-based models are particularly good at natural language processing (NLP) tasks. The best known examples of transformers are GPT-4, BERT and LaMDA.

GPT-4, the most frequently used transformer since ChatGPT entered our lives, can write poetry, create emails from scratch and even tell jokes.

Similarly, BERT is another transformer-based model that has revolutionized language processing. The most important feature of BERT is that it is bidirectional: when learning from text, it looks at the words both before and after each position instead of reading in one direction only. This differentiates it from language models that process text strictly from left to right.

LaMDA is a family of conversational language models from Google. It is built on the Transformer, a neural network architecture for natural language understanding that Google released as open source.

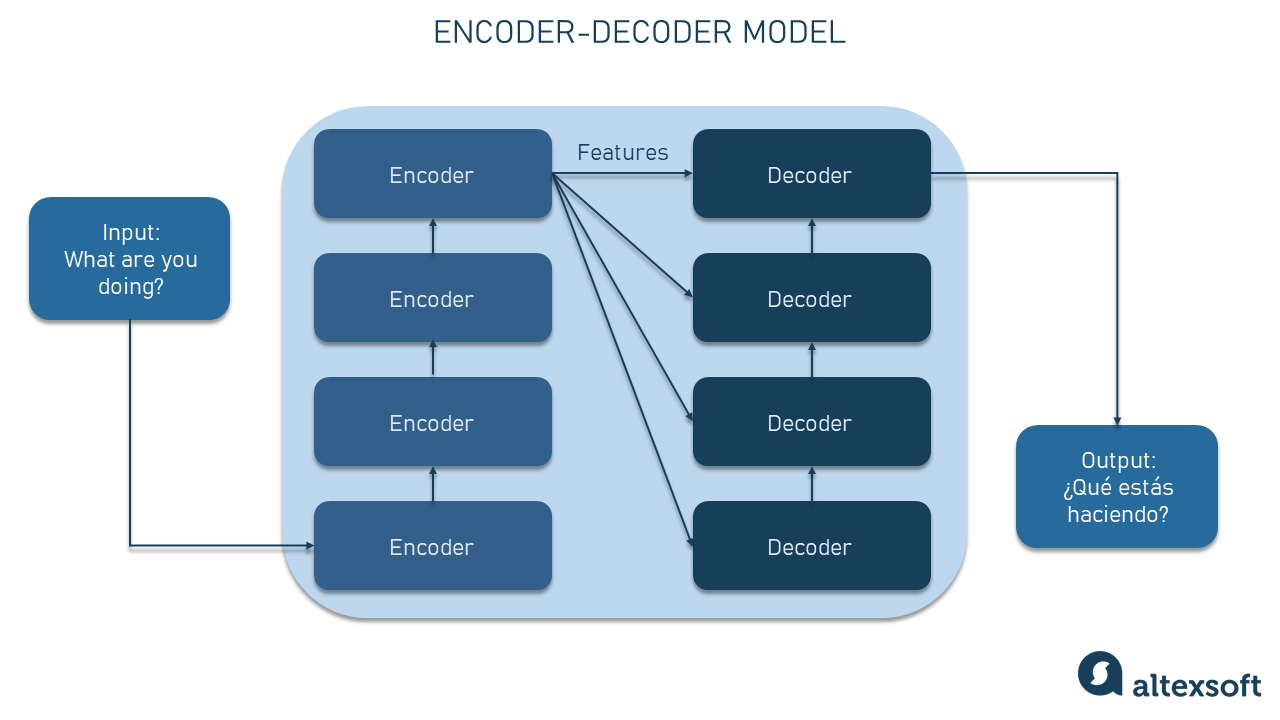

The original transformer architecture works by combining encoder and decoder components. Splitting the work this way breaks large and complex text processing tasks into more manageable pieces.

For example, when translating, the transformer model uses the encoder to make sense of sentences in the source language. The decoder then uses the information from the encoder to create the target-language sentence.
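The "tracking relationships between words" that transformer-based models rely on is usually implemented with an attention mechanism. The sketch below shows scaled dot-product attention in plain Python. It is a simplified illustration: real transformers apply learned projection matrices and several attention heads in parallel, and the 2-dimensional word vectors used here are made up for the example.

```python
import math


def softmax(scores: list[float]) -> list[float]:
    """Convert raw scores into weights that are positive and sum to 1."""
    peak = max(scores)
    exps = [math.exp(s - peak) for s in scores]
    total = sum(exps)
    return [e / total for e in exps]


def attention(queries, keys, values):
    """Scaled dot-product attention over lists of equal-length vectors."""
    dim = len(keys[0])
    outputs, all_weights = [], []
    for q in queries:
        # Similarity of this query to every key, scaled by sqrt(dimension).
        scores = [
            sum(qi * ki for qi, ki in zip(q, k)) / math.sqrt(dim) for k in keys
        ]
        weights = softmax(scores)
        # Weighted average of the value vectors.
        mixed = [
            sum(w * v[j] for w, v in zip(weights, values))
            for j in range(len(values[0]))
        ]
        outputs.append(mixed)
        all_weights.append(weights)
    return outputs, all_weights


# Hypothetical 2-dimensional embeddings for a three-word sentence.
embeddings = [[1.0, 0.0], [0.9, 0.1], [0.0, 1.0]]
outputs, weights = attention(embeddings, embeddings, embeddings)
for row in weights:
    print([round(w, 2) for w in row])
```

Each printed row shows how strongly one word attends to every word in the sentence. Words with similar vectors receive higher weights, which is how context ends up mixed into each word's representation.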

2. Generative Adversarial Networks (GANs)

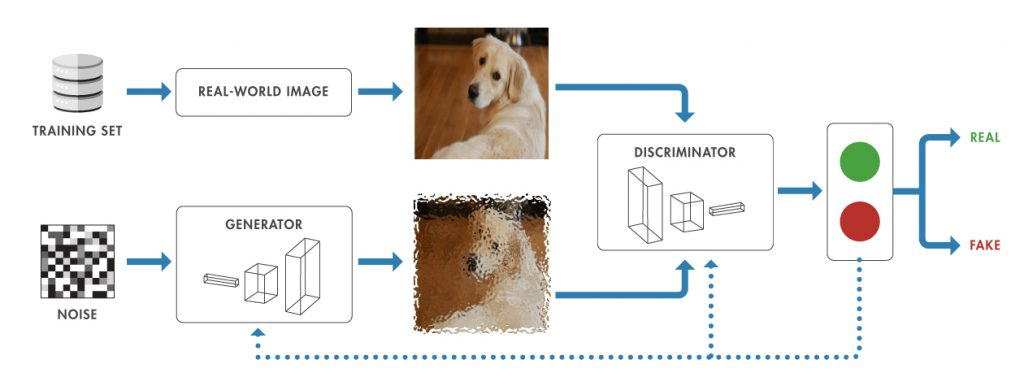

GANs are artificial intelligence models consisting of two different neural networks. The two networks compete with each other and, in doing so, force each other to improve. One network, the generator, aims to produce realistic data samples, while the other, the discriminator, aims to distinguish between real data and data generated by the generator.
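The competition between the two networks can be made concrete through their loss functions. In the usual terminology, the network that produces samples is called the generator and the one that judges them is called the discriminator. The sketch below computes commonly used cross-entropy losses from discriminator outputs; the probabilities are invented for illustration and no actual networks are trained here.

```python
import math


def discriminator_loss(d_real: list[float], d_fake: list[float]) -> float:
    """Binary cross-entropy: the discriminator wants D(real) -> 1, D(fake) -> 0."""
    real_term = sum(-math.log(p) for p in d_real) / len(d_real)
    fake_term = sum(-math.log(1.0 - p) for p in d_fake) / len(d_fake)
    return real_term + fake_term


def generator_loss(d_fake: list[float]) -> float:
    """Non-saturating generator loss: the generator wants D(fake) -> 1."""
    return sum(-math.log(p) for p in d_fake) / len(d_fake)


# Hypothetical discriminator outputs (probability that a sample is real).
d_real = [0.9, 0.8]
early_fakes = [0.1, 0.2]   # fakes are easy to spot
better_fakes = [0.6, 0.7]  # fakes start to fool the discriminator

print(discriminator_loss(d_real, early_fakes), generator_loss(early_fakes))
print(discriminator_loss(d_real, better_fakes), generator_loss(better_fakes))
```

As the fakes improve, the generator's loss falls while the discriminator's loss rises, which is the tug-of-war that pushes both networks to get better.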

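GANs are widely used, especially in image and video production. A well-known example is NVIDIA's StyleGAN, which generates photorealistic human faces. Popular image generators such as DALL-E 2 and Stable Diffusion, by contrast, are built on diffusion models rather than GANs.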

3. Variational Autoencoders (VAEs)

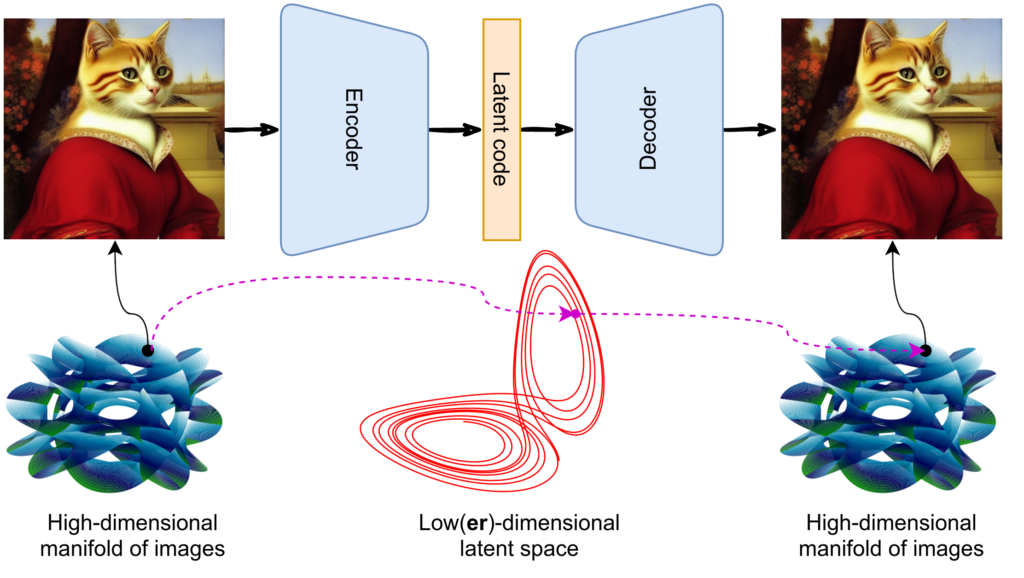

Like GANs, VAEs consist of two main components: the encoder and the decoder. The encoder converts the input into a more compressed form. For example, when it is presented with an image, it keeps only the most important features of the image, capturing its essence and placing it in what is called "latent space". The decoder takes the compressed information and uses it to reconstruct the original data. In other words, it can transform the compressed information back into the original image or data. VAEs can be used in a wide range of areas such as realistic image production, data compression and anomaly detection.
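One detail the description above leaves out is what makes the autoencoder "variational". In the standard formulation, the encoder outputs a mean and a variance for each latent dimension instead of a single point, a latent vector is sampled from that distribution, and a penalty keeps the distribution close to a standard normal. The sketch below shows only these two steps; the encoder outputs are invented numbers, and the encoder and decoder networks themselves are not included.

```python
import math
import random


def sample_latent(
    mu: list[float], log_var: list[float], rng: random.Random
) -> list[float]:
    """Reparameterization: z = mu + sigma * eps, with eps drawn from N(0, 1)."""
    return [
        m + math.exp(0.5 * lv) * rng.gauss(0.0, 1.0) for m, lv in zip(mu, log_var)
    ]


def kl_to_standard_normal(mu: list[float], log_var: list[float]) -> float:
    """KL divergence between N(mu, sigma^2) and N(0, 1), summed over dimensions."""
    return -0.5 * sum(
        1.0 + lv - m * m - math.exp(lv) for m, lv in zip(mu, log_var)
    )


# Hypothetical encoder output for one image: a 2-dimensional latent distribution.
mu, log_var = [0.5, -1.0], [-2.0, -2.0]
rng = random.Random(0)
z = sample_latent(mu, log_var, rng)
print(z, kl_to_standard_normal(mu, log_var))
```

Sampling different latent vectors and passing them to the decoder is what lets a trained VAE produce new data rather than only reconstruct its inputs.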

4. Diffusion-Based Models

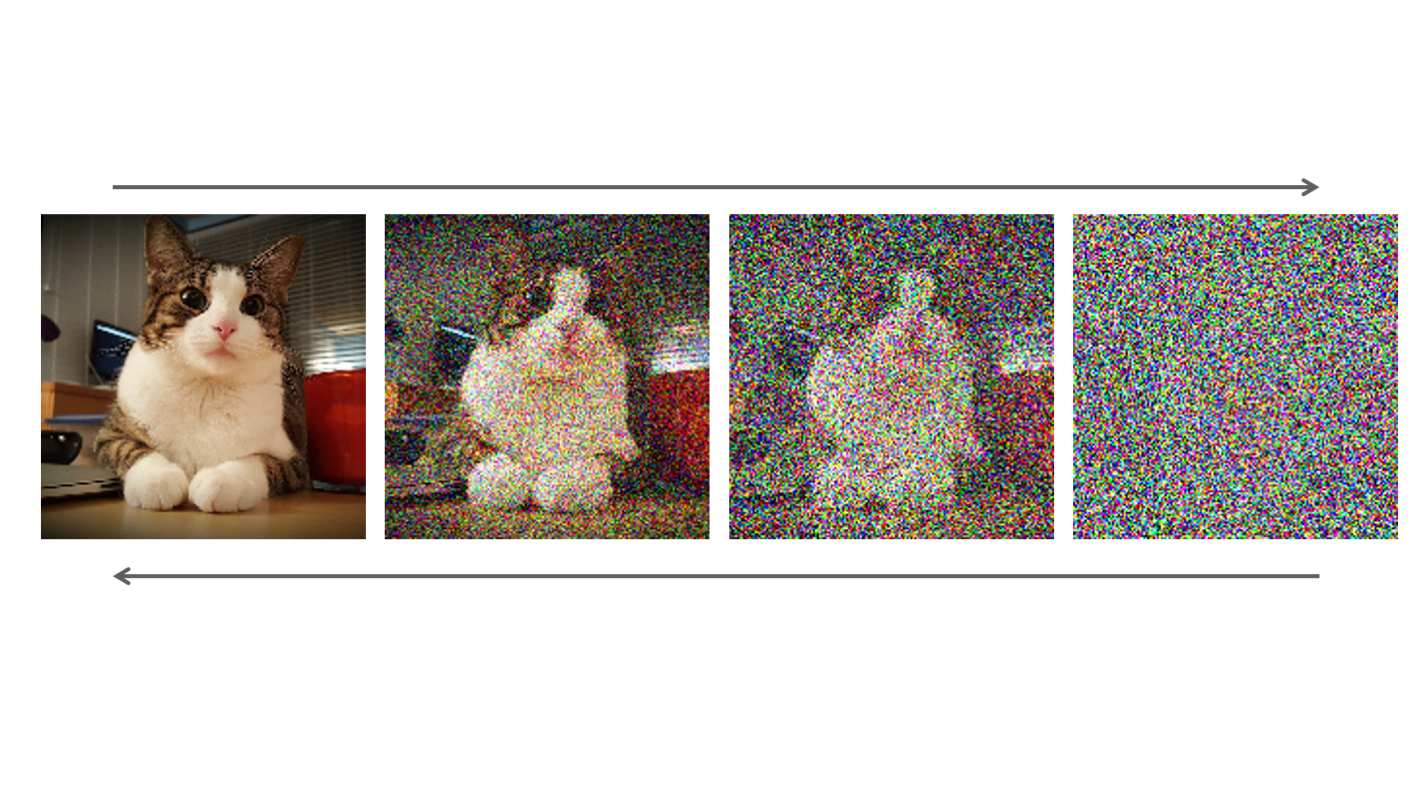

Diffusion-based models learn how data "evolves" between pure noise and a meaningful signal. During training, noise is added to real images step by step until only random pixels remain, and the model learns to undo each step. For example, let's say all the pixels of the picture we present to the model have random colors. The diffusion model calculates how these colors should change step by step, making the picture more meaningful with each step. Likewise, when a diffusion model is presented with a noisy, unclear image of a cat, it can predict what the clear image looks like and use this prediction to produce a cleaner image. This type of model is often used in image generation.
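The noising and denoising idea can be sketched numerically. The code below applies the forward (noise-adding) formula used in common diffusion formulations to a four-pixel "image" and then inverts it. Two things are assumptions for this sketch: the linear noise schedule with its start and end values, and the fact that the true noise is reused where a trained neural network would normally supply a prediction.

```python
import math
import random


def alpha_bar(
    step: int, total_steps: int, beta_start: float = 1e-4, beta_end: float = 0.02
) -> float:
    """Fraction of the original signal that survives after `step` noising steps."""
    product = 1.0
    for t in range(step):
        beta = beta_start + (beta_end - beta_start) * t / (total_steps - 1)
        product *= 1.0 - beta
    return product


def add_noise(x0: list[float], step: int, total_steps: int, rng: random.Random):
    """Forward process: x_t = sqrt(a) * x0 + sqrt(1 - a) * noise."""
    a = alpha_bar(step, total_steps)
    noise = [rng.gauss(0.0, 1.0) for _ in x0]
    xt = [math.sqrt(a) * x + math.sqrt(1.0 - a) * n for x, n in zip(x0, noise)]
    return xt, noise


def remove_noise(
    xt: list[float], noise_estimate: list[float], step: int, total_steps: int
) -> list[float]:
    """Invert the forward formula given an estimate of the noise."""
    a = alpha_bar(step, total_steps)
    return [
        (x - math.sqrt(1.0 - a) * n) / math.sqrt(a)
        for x, n in zip(xt, noise_estimate)
    ]


pixels = [0.2, 0.8, 0.5, 0.1]  # a tiny "image"
rng = random.Random(0)
noisy, true_noise = add_noise(pixels, step=500, total_steps=1000, rng=rng)
# A trained network would predict the noise; here we cheat and use the true noise.
restored = remove_noise(noisy, true_noise, step=500, total_steps=1000)
print(noisy, restored)
```

With a perfect noise estimate the original pixels come back exactly. The hard part, and the part a diffusion model actually learns, is predicting that noise from the noisy image alone.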

History of Generative AI

Today, with the advent of generative AI models and open-source access to these models, many generative AI applications are coming to market every day. As with the boom period of any new technology, some of these applications are here to stay, while others are fast disappearing from the shelves. But if we want to understand where AI is heading, we need to take a quick look at the recent past.

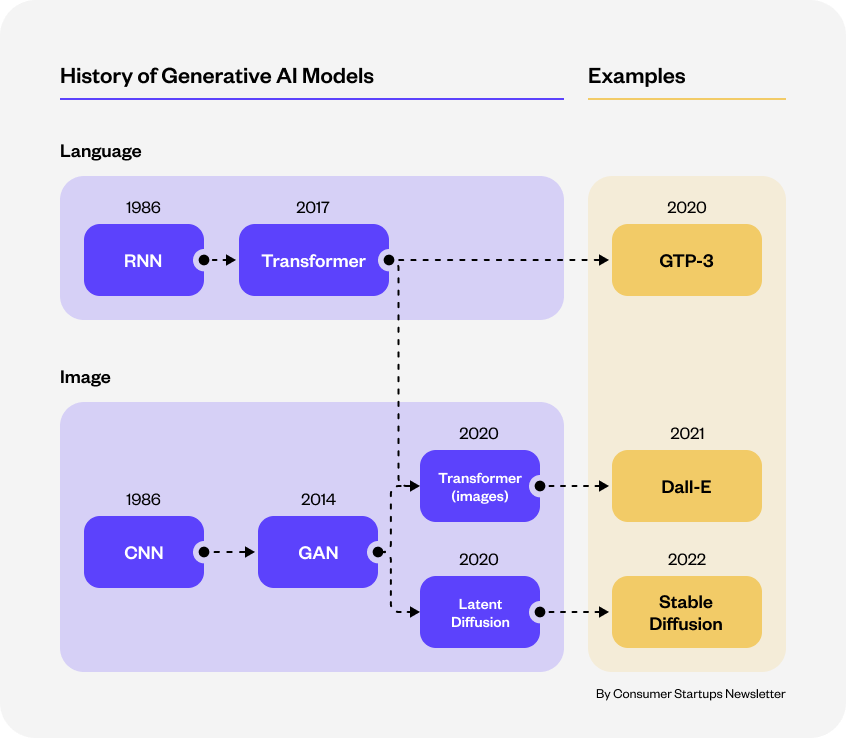

Historical development of generative AI models

1950s

It was 1950 when Alan Turing, the father of computer science, wrote the first academic paper on thinking machines. Before then, artificial intelligence had never progressed beyond a concept, as computers could only execute commands but could not store them.

1980s

In the 1980s and beyond, developments in the field of neural networks began to emerge, fueling progress in the field of generative artificial intelligence. By developing models such as recurrent neural networks (RNNs), researchers demonstrated the ability to generate sequences of data.

2010s

In the 2010s, deep learning took generative AI to a very different place thanks to increasing computational power. VAEs were also introduced in this period.

In 2014, Ian Goodfellow and his colleagues introduced GANs. At the time, small-scale models were considered cutting-edge technology, especially in language understanding, and they mostly took on analytical tasks. However, generating text or code at a level that mimicked human creativity was still a dream.

2020s

As the models grew, generative AI began to deliver human-level and then superhuman results. Between 2015 and 2020, the computing capacity used to train models increased by six orders of magnitude. The earliest generative AI applications became accessible, albeit only to a small audience. Most AI applications never advanced beyond closed beta.

Over time, computing power became even more affordable. After 2022, new techniques such as diffusion models rapidly reduced the cost of training models and running inference. Correspondingly, better algorithms and larger models started to emerge. Developers were able to move from closed beta to open beta or even directly to open source. In fact, for the first time, LLMs became accessible to the majority of developers. The number of generative AI applications began to grow rapidly.

Generative AI Application Areas

1. Marketing and Advertising

Generative AI supports businesses in creating personalized advertising and marketing content, original social media images and videos.

2. E-Commerce

Generative AI can be used in many areas, from analyzing past sales data to predict future demand to analyzing product reviews to offer insights into customer trends.

3. Text Production

Writers can use Generative AI to get inspiration or create drafts for their stories, articles, poems, etc. In particular, processes such as creating blog or web page content can be made much faster with Generative AI.

4. Music and Sound Production

Generative AI is actively used to generate new music tracks, lyrics or sound effects. At the same time, technologies such as converting written text into audio without the need for human intervention are rapidly becoming widespread. This allows you to produce podcast content very quickly, for example.

5. Game Development

Generative AI can be used to create video game worlds and, in particular, non-player characters. This allows game developers to provide users with a richer and more realistic gaming experience.

6. Health and Medicine

Generative AI is actively used in areas such as disease diagnosis, personalized treatment, molecular design, medical imaging and drug development.

7. Architecture and Urban Planning

Generative AI can be used for the design, planning and layout of new buildings.

8. Education and Training Materials

It is possible to have generative AI generate learning materials and educational content to provide personalized learning experiences for students.

9. Finance

Generative AI algorithms can analyze vast amounts of financial data to detect anomalies that signal fraud.

10. Cybersecurity

Generative AI is revolutionizing cybersecurity by accurately assessing threats and vulnerabilities.

The Future of Generative AI

In the coming years, it is predicted that no company will be left unaffected by generative AI, but the retail, consumer products, banking, pharmaceutical and medical product sectors will see the biggest gains. While the technology will impact most business functions, 75% of the total gains it can deliver will likely be generated by marketing and sales, customer operations, software engineering and R&D departments. Manufacturing-based sectors, which rely heavily on physical labor, are not expected to be affected in the short term.

According to a recent report by consulting firm Gartner on emerging technologies and trends, 30% of marketing messages of large organizations will be generated by Generative AI by 2025. Today, this figure is estimated to be around 2%. By the same date, Generative AI will be used in 30% of all product development processes.

By 2028, there is expected to be a 50% increase in the energy efficiency of AI model training processes. This improvement will reduce the environmental and financial costs of AI development.

By 2030, 50% of all schools worldwide will be using generative AI. In sectors such as education, where personalization is at the forefront, the technology will make its mark.

As we move beyond GPT-4, text-based models will be better able to analyze human psychology and creative processes and generate content accordingly. Models like AutoGPT will evolve to allow text-based AI applications to write their own prompts and complete more complex tasks.

Already, there are companies that are adapting these developments to their business processes very quickly. For example, UK-based energy provider Octopus Energy says that 44% of all customer emails are now written by AI. Quite a few companies also report that AI has cut software work that would normally take 8-10 weeks down to just a few days. Given that we are only at the beginning, it is not hard to imagine what kind of future awaits us.

In the 2004 movie I, Robot, Will Smith's character asks the robot in front of him: "Can a robot write a symphony? Can a robot turn a canvas into a masterpiece?"

In the movie, the robot answers this question with "Can you?". However, if the movie had been made within the last few years, we guess the answer would have changed to "Yes. Can you?".

Generative AI and Ethics

As with any new technology, generative AI holds great potential and hope for change, but it also brings with it a number of concerns.

The first problem we face with AI today is that it sometimes produces inaccurate, misleading or manipulative content. Therefore, AI-generated content, at least for now, needs to be seriously evaluated before it is used.

There are general concerns about who bears responsibility for AI-generated content and accountability for how it is produced.

Content created by generative AI is already raising copyright issues. In cases where a model creatively produces artwork, it can become unclear who owns the copyright to the works it "inspired".

In particular, models trained with internet data, such as GPT-4, can learn about prejudices and inequalities in society and reflect them in the content they produce.

As generative AI systems evolve and become more autonomous, concerns also arise about losing control over how these systems operate.

However, as with other technologies in the past, we have the potential as a society and industry to overcome these challenges. In this process, companies in particular have a big job to do in terms of the quality of model training data and making algorithms free of bias.

Sources:

https://www.sequoiacap.com/article/generative-ai-a-creative-new-world/

https://lifearchitect.ai/models/

https://sitn.hms.harvard.edu/flash/2017/history-artificial-intelligence/

https://www.mckinsey.com/capabilities/mckinsey-digital/our-insights/technologys-generational-moment-with-generative-ai-a-cio-and-cto-guide

https://www.gartner.com/en/articles/beyond-chatgpt-the-future-of-generative-ai-for-enterprises

https://news.stanford.edu/2019/02/28/ancient-myths-reveal-early-fantasies-artificial-life/

https://www.gartner.com/en/articles/5-impactful-technologies-from-the-gartner-emerging-technologies-and-trends-impact-radar-for-2022

https://developers.google.com/machine-learning/gan/gan_structure

https://www.altexsoft.com/blog/generative-ai/

https://www.mckinsey.com/capabilities/quantumblack/our-insights/the-state-of-ai-in-2023-generative-ais-breakout-year